Software Projects

This section contains personal and professional side projects that reflect my interests in scientific computing, machine learning, automation, and software engineering.

While many of my professional systems were developed within research projects and are not publicly available, these projects showcase the tools, technologies, and approaches I use when designing software.

I focus on operational systems for geospatial forecasting, where physics-based models, data-assimilation workflows, and vision-inspired deep learning methods are combined to balance accuracy, speed, and scalability.

Public code currently available: https://git.ferja.eu/users/jmfernan/projects

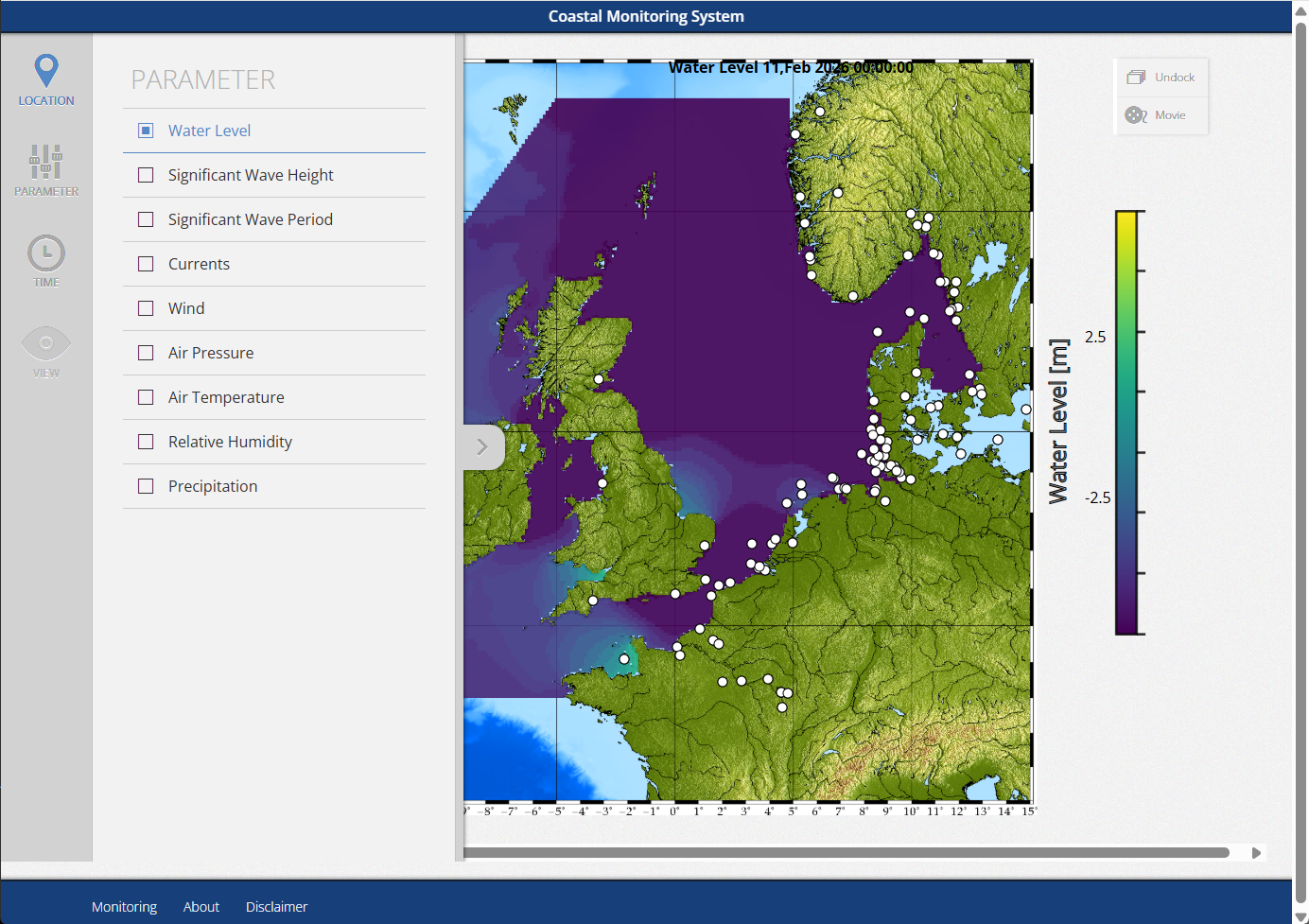

Operational System North Sea

https://operational.gnuwater.com/northsea

This application is a real-time operational decision-support platform for water-level and coastal conditions. It integrates data ingestion, observation processing, and web delivery into a production-ready system. It automates forecasting and monitoring workflows, including real-time measurement normalization from heterogeneous feeds and daily-block ingestion of meteorological model outputs, enabling continuous operational assessment and publishing of actionable insights.

Technically, it combines Python-based processing pipelines, containerized services, and database-backed web delivery. It supports grid and bathymetry handling, observation management, external model ingestion (including GFS), and efficient daily model batching. The architecture is orchestrated via compose.yaml and reinforced by quality gates (Black, mypy, pylint), demonstrating maintainability, reliability, and production focus.

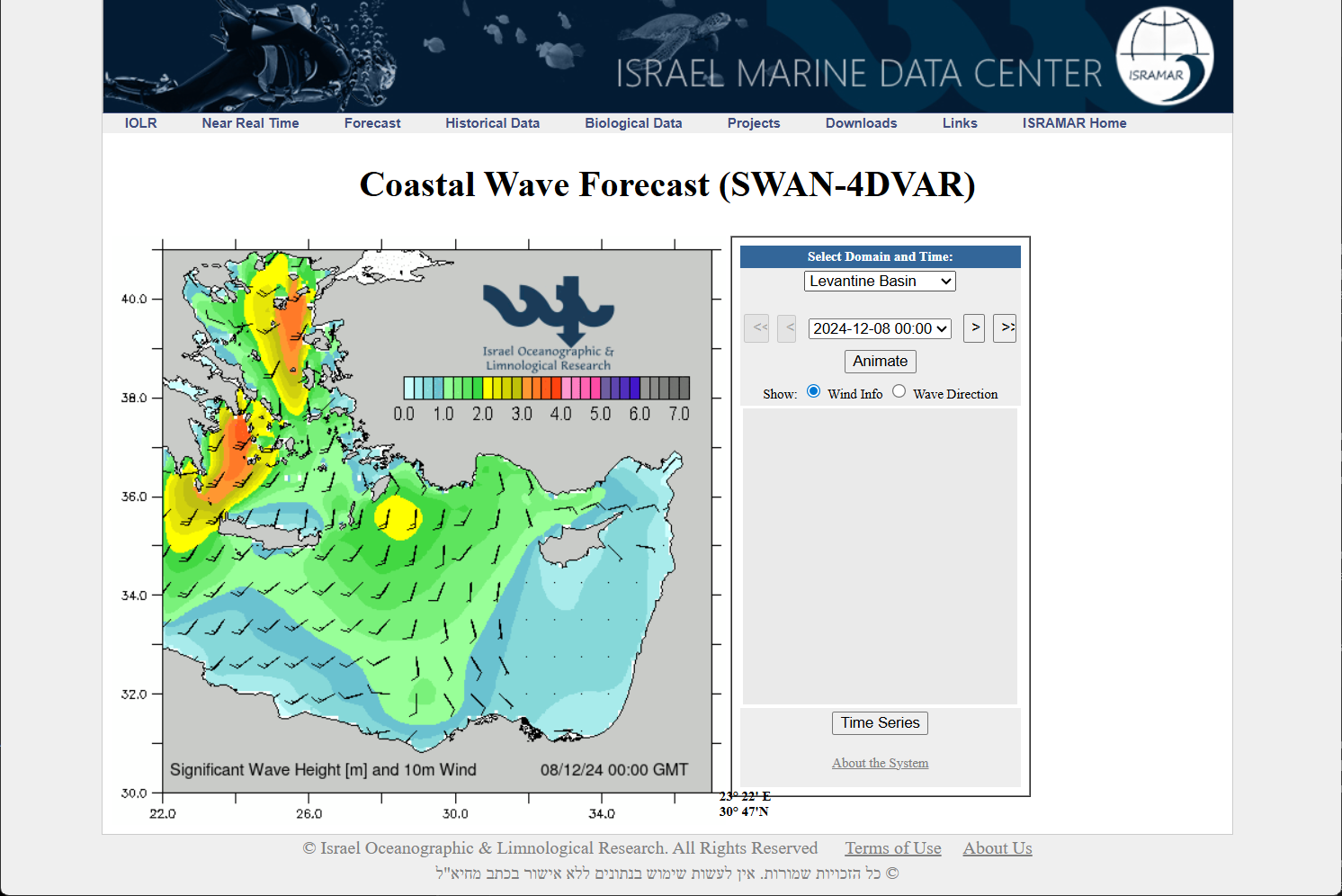

Coastal Wave Forecast (SWAN-4DVAR)

Online prediction

This module implements a full data-assimilation workflow based on 4DVar to improve coastal wave forecasts. It combines near-real-time station observations and Copernicus data with SWAN simulations to estimate optimal wind-field corrections over a 24-hour analysis window. The resulting analysis is used as initialization for operational forecasts. A Python orchestration layer automates simulation execution, optimization loops, and data handling.

Among all developed approaches, this module provides the highest forecast accuracy and best agreement with observations, especially during storms and short-term events. The trade-off is high computational cost due to multiple parallel SWAN runs and iterative optimization.
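
To make the idea of estimating a wind correction over an analysis window concrete, here is a deliberately tiny sketch of a variational cost function in the spirit of 4DVar. It is not the module's code: the function `toy_wave_height` is a hypothetical stand-in for a SWAN run, the single scalar correction factor, the error standard deviations, and the golden-section minimiser are all assumptions chosen for illustration, and a real system would optimise a full wind field.

```python
import math

def toy_wave_height(wind_speed, factor):
    """Hypothetical stand-in for a SWAN run: Hs grows with the squared corrected wind."""
    return 0.025 * (factor * wind_speed) ** 2

def cost(factor, winds, observed_hs, sigma_b=0.2, sigma_o=0.3):
    """Variational cost: background penalty plus observation misfit over the window."""
    background = ((factor - 1.0) / sigma_b) ** 2
    misfit = sum(
        ((toy_wave_height(u, factor) - y) / sigma_o) ** 2
        for u, y in zip(winds, observed_hs)
    )
    return 0.5 * (background + misfit)

def minimise(f, lo, hi, tol=1e-6):
    """Golden-section search for a function with a single minimum on [lo, hi]."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    while b - a > tol:
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
        if f(c) < f(d):
            b = d
        else:
            a = c
    return 0.5 * (a + b)

# 24 hourly wind speeds (m/s) and synthetic observations generated with factor 1.15
winds = [8.0 + 4.0 * math.sin(2.0 * math.pi * h / 24.0) for h in range(24)]
true_factor = 1.15
observed_hs = [toy_wave_height(u, true_factor) for u in winds]

best_factor = minimise(lambda a: cost(a, winds, observed_hs), 0.5, 2.0)
print(f"estimated wind-correction factor: {best_factor:.3f}")
```

In this toy setup the estimate lands slightly below 1.15, because the background term pulls the factor toward the uncorrected value of 1. The cost of the real workflow comes from the fact that every evaluation of the misfit requires a wave-model run rather than a one-line formula.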

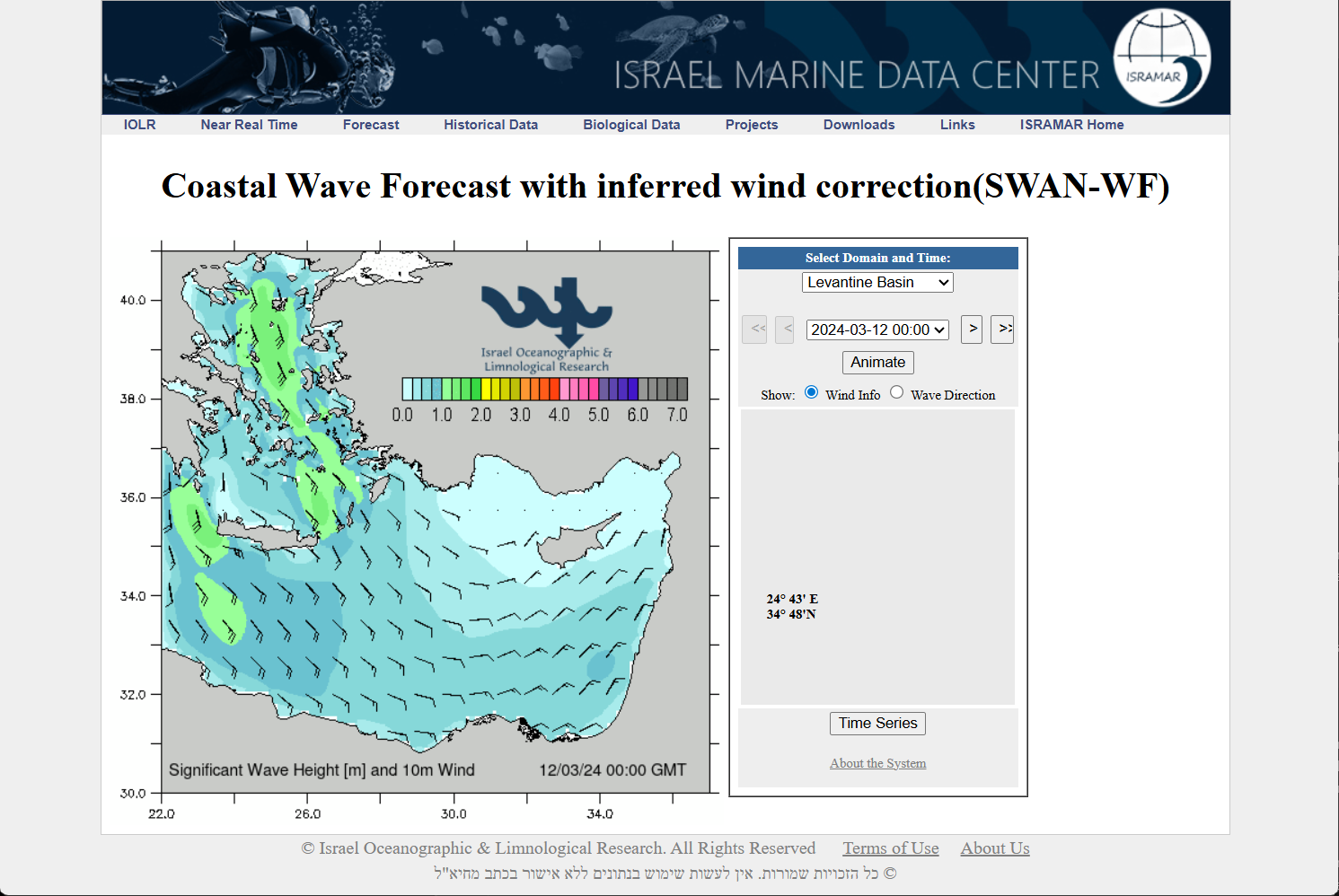

Coastal Wave Forecast with Neural Network Wind Correction (SWAN-WF)

Online prediction

This module integrates machine learning into a physics-based workflow by predicting a wind-correction factor used as SWAN forcing. During the assimilation phase, optimal wind amplification factors are derived with 4DVar. A neural network is then trained to emulate those corrections directly from meteorological inputs, enabling faster operational runs without executing the full assimilation process each cycle.

Relative to traditional forecasting, this hybrid strategy improves storm behavior and reduces systematic bias while preserving SWAN’s physical consistency. It offers a balanced compromise between accuracy, interpretability, and computational efficiency.

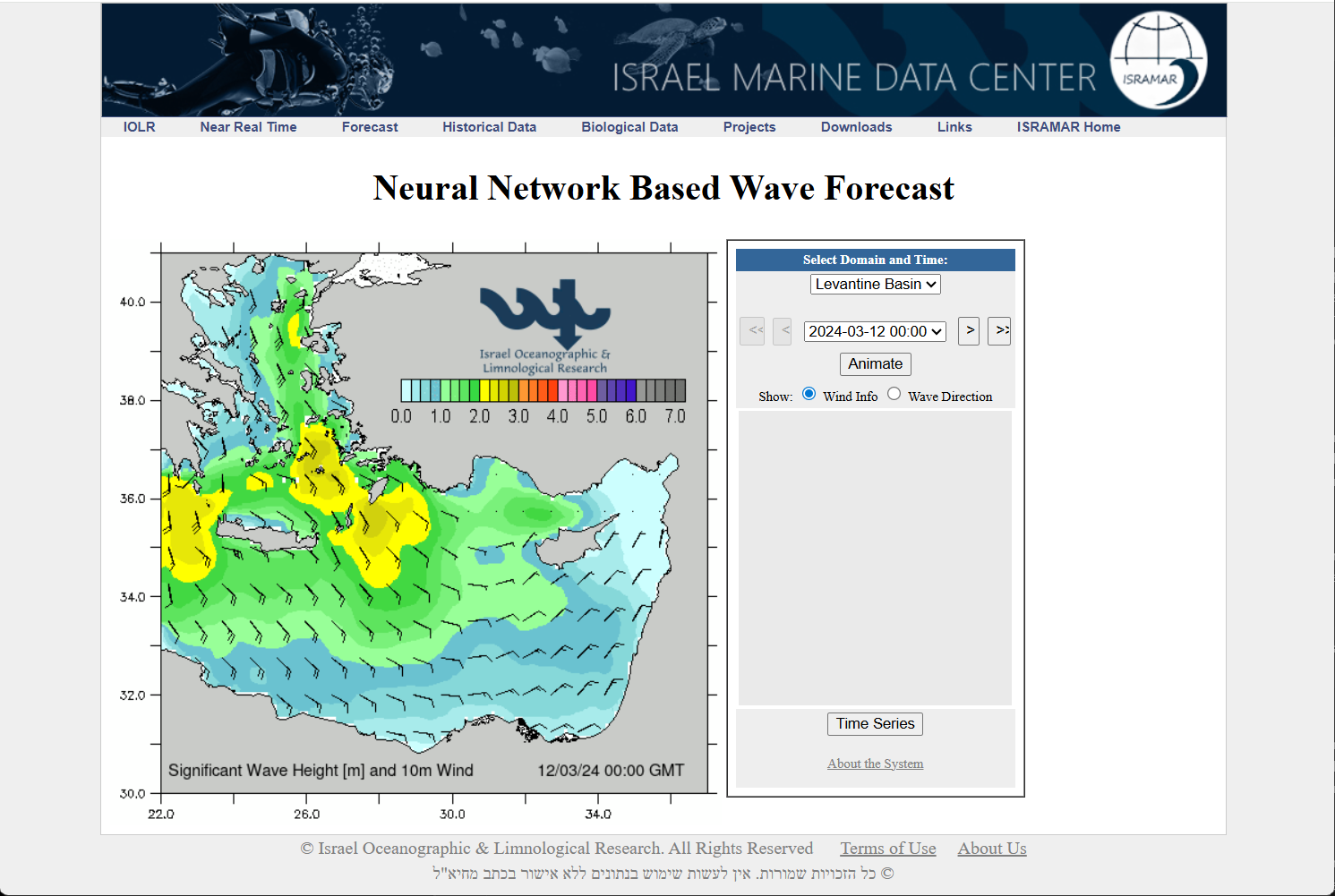

Neural Wave Forecasting with Vision-Inspired Deep Learning (DCNN Wave Model)

Online prediction

DARTIS project

This project delivers an end-to-end deep learning system for online wave forecasting, predicting 2D fields of significant wave height directly from meteorological forecasts. The core idea is to treat atmospheric and ocean fields as image-like spatial grids and learn patterns with convolutional neural networks (CNNs), similar to computer vision pipelines.

The architecture combines convolutional autoencoders for wind, pressure, and wave fields; a latent-space mapping from encoded meteorology to encoded wave states; and a decoder that reconstructs full wave-height maps. Trained on historical SWAN and SKIRON outputs with assimilation products as targets, it reproduces key dynamics while compressing fields by up to 25x.

Operationally, it can generate a 24-hour forecast in seconds on a single CPU core. Compared with full assimilation, it offers much lower latency and a strong performance-to-cost ratio, but only intermediate accuracy, because it does not directly ingest live observations.

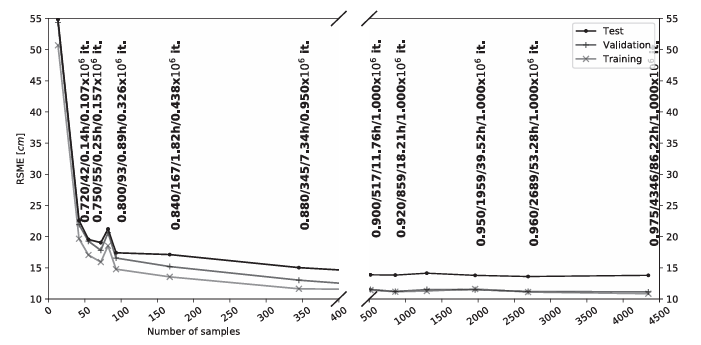

ADSS: Angular Distance Sample Selection for Efficient Training

Repository

Paper

ADSS is a data-efficiency method designed to reduce training cost by selecting a compact but informative subset from large datasets.

Although originally presented for tidal time-series workflows, the same principle transfers well to image-based AI pipelines: instead of training on all frames/images, ADSS prioritizes representative and high-value samples, reducing redundancy while preserving predictive signal.
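
The published ADSS procedure is not reproduced here. As a rough illustration of what selecting by angular distance can look like, the sketch below applies one plausible rule: walk through the samples and keep one only if its direction differs by at least a minimum angle from every sample already kept. The function names, the greedy ordering, and the 10-degree threshold are assumptions made for this example.

```python
import math

def angular_distance(u, v):
    """Angle in radians between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    # Clamp to guard against rounding slightly outside [-1, 1]
    return math.acos(max(-1.0, min(1.0, dot / norm)))

def select_samples(samples, min_angle):
    """Greedy pass: keep a sample only if it is at least min_angle from every kept one."""
    kept = []
    for index, sample in enumerate(samples):
        if all(angular_distance(sample, samples[k]) >= min_angle for k in kept):
            kept.append(index)
    return kept

samples = [
    (1.0, 0.0),
    (0.99, 0.05),   # nearly the same direction as the first sample
    (0.0, 1.0),
    (0.02, 2.0),    # nearly the same direction as the third, different magnitude
    (-1.0, 0.0),
]
selected = select_samples(samples, min_angle=math.radians(10))
print(selected)  # → [0, 2, 4]
```

Because the criterion depends on direction only, samples that differ mainly in magnitude are treated as redundant, which is the kind of redundancy reduction described above.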

In practice, this enables faster experimentation, lower compute requirements, and more scalable retraining cycles.

As a portfolio component, it highlights methodological depth beyond model architecture: not only building models, but also optimizing the data pipeline that controls training quality, speed, and operational feasibility.

Target Face Detection and Identification (Real-Time + Batch)

git.ferja.eu/jmfernan/face_recognition

This project implements a video-based target-face identification pipeline using MTCNN for face detection and FaceNet embeddings for identity matching.

A target profile is built from reference images, and each detected face is classified as target or non-target using cosine similarity.
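
The matching step can be sketched in a few lines. This is a minimal illustration rather than the repository's code: the embeddings are toy 3-dimensional vectors (real FaceNet embeddings are much higher-dimensional), averaging the reference embeddings is one common way to build a profile, and the 0.6 threshold and all function names are assumptions.

```python
import math

def l2_normalise(vec):
    """Scale a non-zero vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def build_target_profile(reference_embeddings):
    """Average the normalised reference embeddings and re-normalise the mean."""
    normalised = [l2_normalise(e) for e in reference_embeddings]
    dim = len(normalised[0])
    mean = [sum(e[i] for e in normalised) / len(normalised) for i in range(dim)]
    return l2_normalise(mean)

def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors."""
    u, v = l2_normalise(u), l2_normalise(v)
    return sum(a * b for a, b in zip(u, v))

def is_target(face_embedding, profile, threshold=0.6):
    """Classify a detected face as target when similarity reaches the threshold."""
    return cosine_similarity(face_embedding, profile) >= threshold

# Toy embeddings standing in for the output of the embedding network
references = [(0.9, 0.1, 0.0), (0.8, 0.2, 0.1)]
profile = build_target_profile(references)

face_a = (0.85, 0.15, 0.05)   # close to the reference direction
face_b = (0.0, 0.2, 0.9)      # points in a very different direction
print(is_target(face_a, profile))  # → True
print(is_target(face_b, profile))  # → False
```

In practice the threshold would be tuned on held-out frames, since it trades missed detections against false matches.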

The system supports both batch processing (with positive/negative frame export) and live playback, including multi-person scenes where one face is correctly identified as the target while others are rejected as non-targets.

The pipeline also integrates ADSS (Angular Distance Sample Selection) to reduce redundant video frames and prioritize high-value candidate samples for labeling and retraining.

This improves data efficiency and shortens training and retraining, while real-time inference speed is determined by the face detection and embedding pipeline.

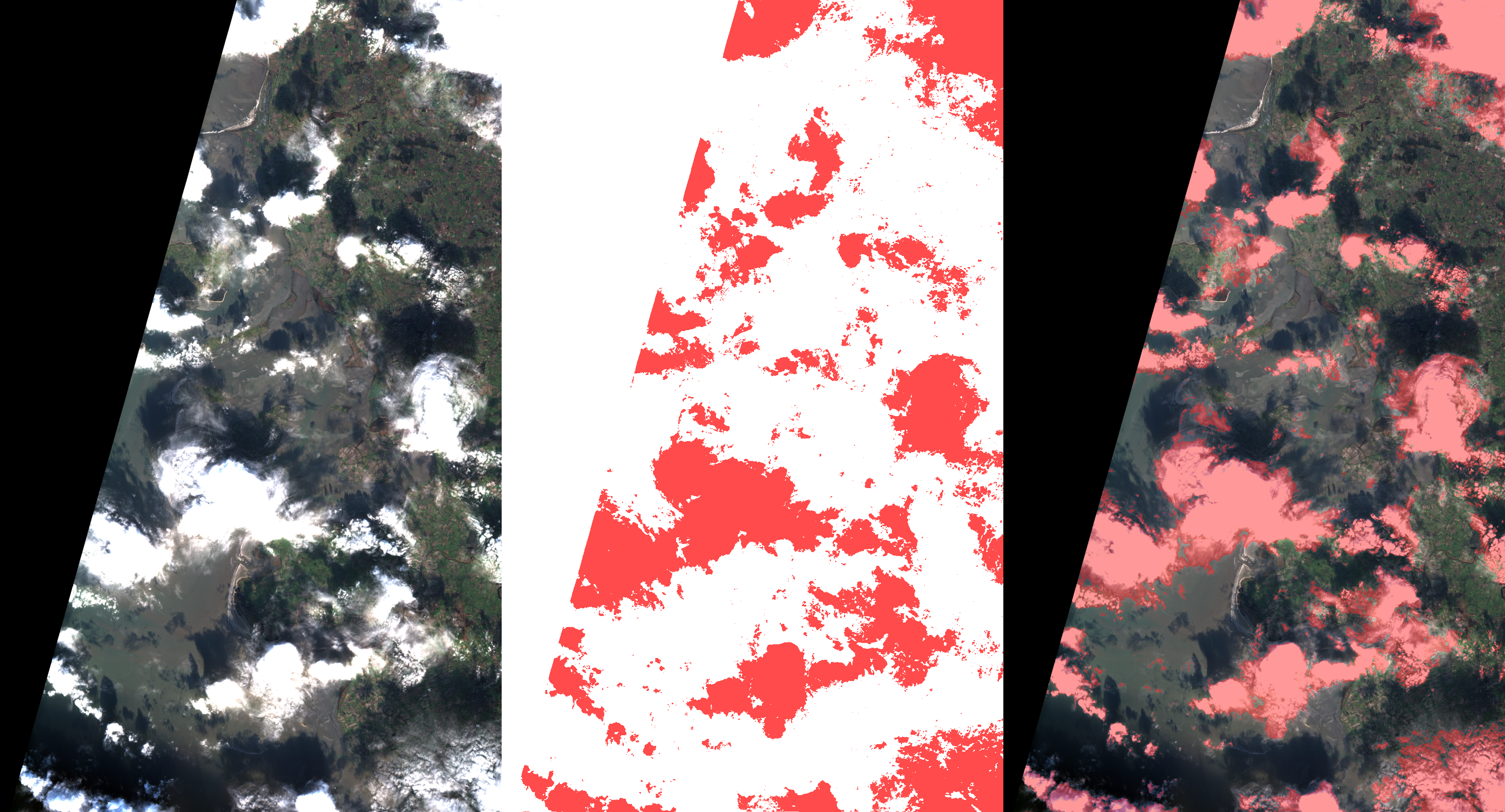

Sentinel Pixel Lab

git.ferja.eu/jmfernan/sentinel_pixel_lab

This is a satellite-image analytics application for Sentinel-2 data discovery, download, and pixel-level classification. It queries STAC-compatible catalogs, filters by spatial and temporal bounds, and retrieves spectral assets (coastal, blue, green, red, NIR, SWIR). It includes practical annotation using color masks (green for positive pixels, red for negative), enabling non-ML specialists to produce training labels.
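
As an illustration of the discovery step, the sketch below builds the JSON body for a STAC API item search using only the core `collections`, `bbox`, `datetime`, and `limit` fields. It does not contact any catalog. The helper name `build_stac_search` is hypothetical, and the collection id `sentinel-2-l2a` is an assumption: it is used by some public catalogs, but the id and the asset names for each band depend on the catalog being queried.

```python
import json
from datetime import date

def build_stac_search(bbox, start, end, collection="sentinel-2-l2a", limit=50):
    """Build a STAC item-search body for a bounding box and a date range.

    bbox is (west, south, east, north) in degrees; start and end are dates.
    """
    west, south, east, north = bbox
    if south > north:
        raise ValueError("bbox must be ordered as (west, south, east, north)")
    if start > end:
        raise ValueError("start date must not be after end date")
    return {
        "collections": [collection],
        "bbox": [west, south, east, north],
        # Closed interval in RFC 3339 format, as expected by the datetime field
        "datetime": f"{start.isoformat()}T00:00:00Z/{end.isoformat()}T23:59:59Z",
        "limit": limit,
    }

payload = build_stac_search(
    bbox=(3.0, 51.0, 4.5, 52.0),
    start=date(2024, 6, 1),
    end=date(2024, 6, 30),
)
print(json.dumps(payload, indent=2))
```

The resulting body would be sent to the catalog's search endpoint; the returned items then list the downloadable assets per spectral band.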

The classification engine uses supervised learning over stacked spectral-band features (MLP-based pixel classifier) and generates binary rasters plus visual overlays for QA. Compared with heavier segmentation stacks, it is lightweight, reproducible, and efficient for iterative geospatial workflows with real training and inference capability.

Automonitoring System

git.ferja.eu/jmfernan/automonitoring

This application automates end-to-end Delft3D model setup from compact configuration inputs, generating pre-processing artifacts for hydrodynamic and wave simulations. It replaces labor-intensive manual workflows for grid creation, bathymetry interpolation, boundary and initial conditions, meteorological forcing preparation, and control-file wiring while preserving reproducibility.

It supports multi-domain workflows, including nested meshes and tiled m x n decomposition layouts with interface-coherent metadata. The pipeline is modular and deterministic, with clear responsibility boundaries and validation-oriented execution. It can ingest GEBCO-type bathymetry, HYCOM, TPXO/pyTMD, WAVEWATCH3 pathways, and GFS/ERA5-CDS forcing. Outputs are execution-ready model packages with lower setup time and reduced configuration risk.
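
A tiled m x n layout can be pictured as a grid of index ranges plus, for each tile, a record of which tiles it shares an interface with. The sketch below is an assumption-laden illustration of that bookkeeping, not the application's data model: the function names, the half-open index ranges, and the neighbour dictionary are all invented for this example.

```python
def split_range(total, parts):
    """Split `total` cells into `parts` contiguous half-open ranges of near-equal size."""
    base, extra = divmod(total, parts)
    ranges, start = [], 0
    for p in range(parts):
        size = base + (1 if p < extra else 0)
        ranges.append((start, start + size))
        start += size
    return ranges

def tile_layout(nx, ny, m, n):
    """Return tiles keyed by (row, col) with index ranges and neighbouring tiles."""
    x_ranges = split_range(nx, n)   # n columns along x
    y_ranges = split_range(ny, m)   # m rows along y
    tiles = {}
    for row in range(m):
        for col in range(n):
            tiles[(row, col)] = {
                "x": x_ranges[col],
                "y": y_ranges[row],
                "neighbours": {
                    "west": (row, col - 1) if col > 0 else None,
                    "east": (row, col + 1) if col < n - 1 else None,
                    "south": (row - 1, col) if row > 0 else None,
                    "north": (row + 1, col) if row < m - 1 else None,
                },
            }
    return tiles

layout = tile_layout(nx=100, ny=60, m=2, n=3)
print(layout[(0, 1)])
```

Keeping the neighbour information next to the index ranges is what allows interface metadata to stay consistent: each shared edge is described from both sides by the same pair of tile keys.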

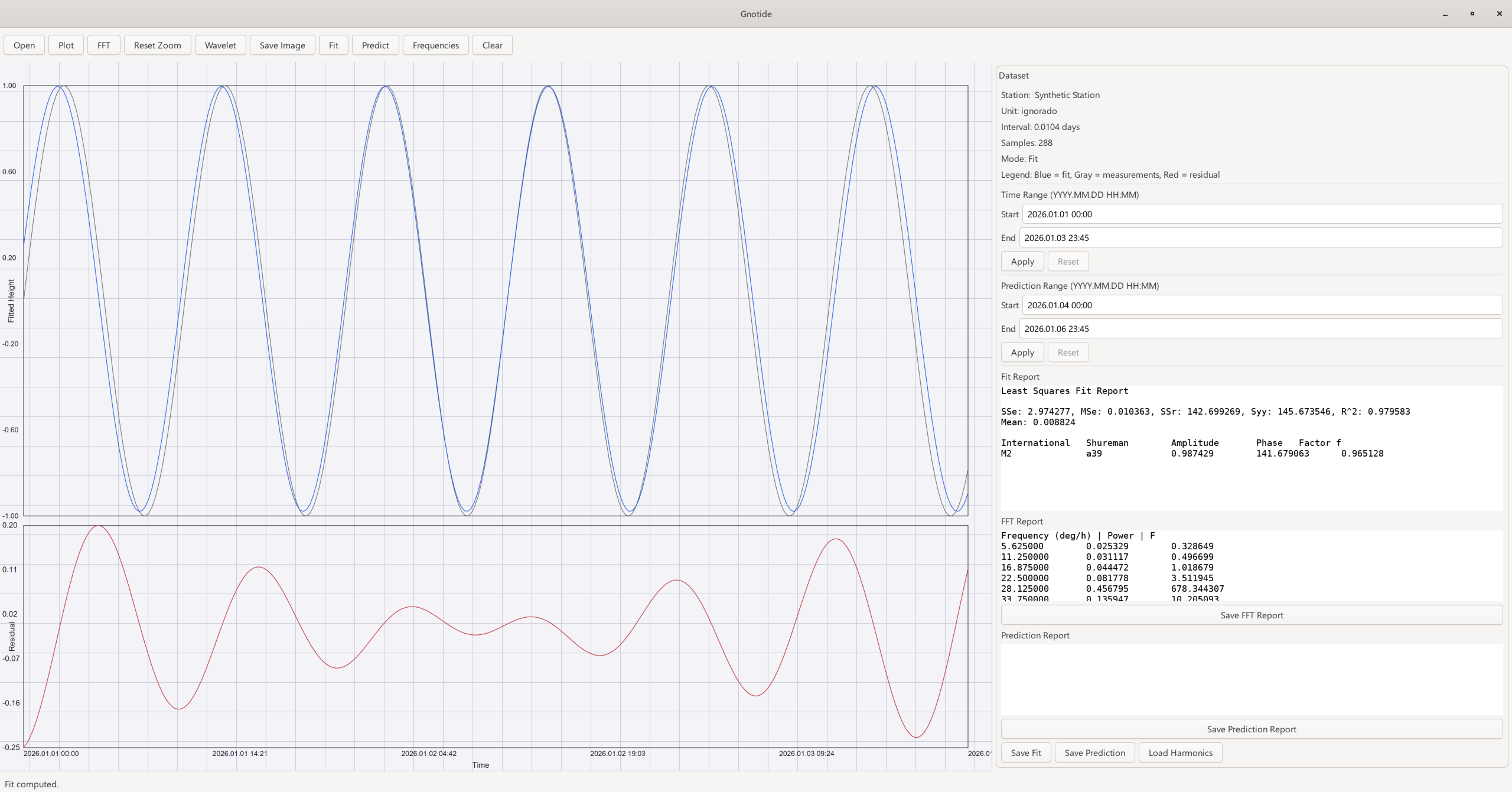

Gnotide

git.ferja.eu/jmfernan/gnotide4

Gnotide is a scientific application for tidal time-series analysis, covering the workflow from station data ingestion to spectral interpretation and prediction. It combines FFT, wavelet analysis, and least-squares harmonic fitting to identify dominant constituents, characterize non-stationary behavior, and produce reproducible tide predictions and technical reports.
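
Least-squares harmonic fitting can be illustrated with a short, standard-library-only Python sketch (Gnotide itself is written in C, and this is not its code). The water level is modelled as a mean plus a cosine and sine term per constituent, and the coefficients are found from the normal equations. Only M2 and S2 are fitted, the record is synthetic and noise-free, and all function names are assumptions.

```python
import math

# Constituent periods in hours (standard values for M2 and S2)
PERIODS_H = {"M2": 12.4206012, "S2": 12.0}

def design_row(t_hours):
    """One row of the design matrix: [1, cos, sin, cos, sin, ...]."""
    row = [1.0]
    for period in PERIODS_H.values():
        omega = 2.0 * math.pi / period
        row += [math.cos(omega * t_hours), math.sin(omega * t_hours)]
    return row

def solve(a, b):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def harmonic_fit(times, levels):
    """Fit mean, amplitude, and phase per constituent via the normal equations."""
    rows = [design_row(t) for t in times]
    k = len(rows[0])
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    aty = [sum(r[i] * y for r, y in zip(rows, levels)) for i in range(k)]
    coeffs = solve(ata, aty)
    result = {"mean": coeffs[0]}
    for idx, name in enumerate(PERIODS_H):
        c, s = coeffs[1 + 2 * idx], coeffs[2 + 2 * idx]
        result[name] = {
            "amplitude": math.hypot(c, s),
            "phase_deg": math.degrees(math.atan2(s, c)) % 360.0,
        }
    return result

def synthetic_level(t):
    """Noise-free test signal: mean 0.2 m, M2 1.0 m at 40 deg, S2 0.3 m at 110 deg."""
    m2 = 1.0 * math.cos(2.0 * math.pi * t / PERIODS_H["M2"] - math.radians(40.0))
    s2 = 0.3 * math.cos(2.0 * math.pi * t / PERIODS_H["S2"] - math.radians(110.0))
    return 0.2 + m2 + s2

times = [float(h) for h in range(30 * 24)]   # 30 days of hourly samples
fit = harmonic_fit(times, [synthetic_level(t) for t in times])
print(fit)
```

A 30-day record is used because M2 and S2 are close in frequency and need roughly two weeks of data to be separated reliably. With real, noisy observations a more robust solver and more constituents would be appropriate.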

From a software engineering perspective, it is a modernized C + GTK4 codebase with strong quality gates: layered testing (unit, golden numerical regression, UI flow/static), cross-platform CI/CD (Linux/Windows), static analysis, and coverage enforcement. The architecture separates deterministic numerical modules from visualization and interaction logic, supporting safe refactoring and long-term maintainability.

Web page

This project delivers a configurable, content-driven website where pages are served from static HTML and rendered through a consistent layout with navigation, header/footer configuration, and member-only areas. It supports dynamic content discovery, hot reload, and an admin reload endpoint, together with security features such as headers, CSRF protection, session rotation, rate limiting, sanitization, and obfuscated contact tags.
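
Of the security features listed, rate limiting is the easiest to show in isolation. The following is a generic in-memory sliding-window limiter, offered as a sketch of the technique rather than the site's implementation: the class name, the per-client key, and the injectable clock are assumptions, and a multi-instance deployment would need shared storage instead of a local dictionary.

```python
import time
from collections import defaultdict, deque

class SlidingWindowLimiter:
    """Allow at most max_requests per client within any window_seconds interval."""

    def __init__(self, max_requests, window_seconds, clock=time.monotonic):
        self.max_requests = max_requests
        self.window = window_seconds
        self.clock = clock               # injectable so the behaviour can be tested
        self._hits = defaultdict(deque)  # client id -> timestamps of accepted requests

    def allow(self, client_id):
        now = self.clock()
        hits = self._hits[client_id]
        # Drop timestamps that have fallen out of the window
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True

# Demonstration with a fake clock instead of real time
fake_now = [0.0]
limiter = SlidingWindowLimiter(3, 60.0, clock=lambda: fake_now[0])
results = [limiter.allow("10.0.0.1") for _ in range(4)]
print(results)  # → [True, True, True, False]

fake_now[0] = 61.0
after_window = limiter.allow("10.0.0.1")
print(after_window)  # → True
```

Rejected requests are not recorded, so a client that keeps retrying while blocked does not extend its own lockout.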

Technically, it demonstrates separation of concerns (config, services, routes, templates), coverage around security and content behavior, and deployment-ready practices (environment-driven config, optional Redis backing, container support). The architecture is intentionally lean for low operational complexity and can scale through Redis-backed sessions/rate limiting, reverse-proxy caching, and horizontal app instances.